How to use Milvus vector database to store and retrieve LLM embeddings using LangChain

This tutorial will guide you through the process of setting up the Milvus Vector Database and querying stored embeddings using the LangChain framework.

In this tutorial, we're going to do the following:

- Go over key concepts

- Install the Milvus vector database

- Create and store vector embeddings

- Perform similarity search using LangChain + Milvus

Background

In the last few years, we've been creating and consuming insane amounts of data from many sources. Think of all the emails we send and receive, social interactions, messages, photos, videos, and so many other things...

How do we make sense of all of this information? Advances in Machine Learning and Deep Learning allow us to understand and interpret all of this unstructured data by converting it into Vector Embeddings.

Vector Embeddings

Introduction to Vector Embeddings

It's simple, really. Ask yourself: how would you go about storing two images in a database and checking whether they have similar features? Well?

One way is to use Vector Embeddings.

Images, as well as other types of unstructured data, can be transformed into a numerical representation, and that representation is precisely what a Vector Embedding is.

As you can see in the diagram above, the text "anatine amigos" goes into an Embedding model, which then outputs a list of numbers, aka a vector.
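To make the "text in, numbers out" idea concrete, here is a minimal sketch of a toy embedding function. It is not a learned model and captures no meaning; it only hashes each word into one of a few buckets so we can see the shape of the operation. The name toy_embed and the dim parameter are made up for this illustration.

```python
import hashlib
import math


def toy_embed(text: str, dim: int = 8) -> list[float]:
    """Map text to a fixed-length unit vector by hashing each word into a bucket.

    This is NOT a learned embedding model; it only illustrates that
    arbitrary text becomes a fixed-size list of numbers.
    """
    vec = [0.0] * dim
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vec[digest[0] % dim] += 1.0  # count the word in its bucket
    norm = math.sqrt(sum(x * x for x in vec))
    # Normalize to unit length so vectors of different texts are comparable
    return [x / norm for x in vec] if norm else vec


vector = toy_embed("anatine amigos")
print(len(vector), vector)
```

A real embedding model produces far longer vectors (hundreds or thousands of dimensions) in which nearby vectors correspond to similar meaning, which this toy version does not attempt.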

Benefits of Vector Embeddings

Some of the benefits of using Vector Embeddings:

- Similarity Search

- Anomaly Detection

- Natural Language Processing (NLP) Tasks

Great, now let's assume we've converted our data into vector embeddings. How about storing them in a SQL or NoSQL database? While possible, it's best to use a specialized type of database for this.

Vector Database Management Systems (VDBMS)

Introduction to Vector Databases

Just like we have traditional SQL databases that store structured data (i.e. columns and rows), in this day and age we need specialized databases capable of storing embeddings.

Simply put, a Vector Database is a type of database that is designed to efficiently store, query, and manipulate vector embeddings.
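As a rough mental model, the three operations in that definition (store, query, manipulate) can be sketched with a tiny in-memory class. This is only an illustration under simple assumptions: TinyVectorStore and its methods are hypothetical names, and it scans every row on each search, whereas a real vector database such as Milvus relies on indexes to avoid that.

```python
import math


class TinyVectorStore:
    """A toy in-memory store: insert, delete, and brute-force nearest search."""

    def __init__(self) -> None:
        self._rows: dict[str, list[float]] = {}

    def insert(self, key: str, vector: list[float]) -> None:
        self._rows[key] = list(vector)

    def delete(self, key: str) -> None:
        self._rows.pop(key, None)

    def search(self, query: list[float], k: int = 1) -> list[tuple[str, float]]:
        """Return the k stored keys closest to query by Euclidean distance."""
        scored = [(key, math.dist(query, vec)) for key, vec in self._rows.items()]
        return sorted(scored, key=lambda pair: pair[1])[:k]


store = TinyVectorStore()
store.insert("cat", [0.9, 0.1])
store.insert("dog", [0.8, 0.2])
store.insert("car", [0.1, 0.9])

# The two entries nearest to the query vector, closest first
print(store.search([1.0, 0.0], k=2))
```

The brute-force scan is fine for three vectors but grows linearly with the number of rows, which is the main reason dedicated vector databases exist.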

Hello Milvus

Introduction to the Milvus Vector Database

Milvus was created in 2019 with a singular goal: store, index, and manage massive embedding vectors generated by deep neural networks and other machine learning (ML) models. - https://milvus.io/docs/overview.md

Milvus is a popular Vector Database designed to handle embeddings. It is built primarily to perform optimized vector operations.

Milvus Vector Database Pricing

Well, it costs $0. Milvus is open-source and free to use.

Zilliz Cloud is a fully managed option offered by Zilliz, the company behind Milvus. They offer a free starter option as well as other paid plans listed below:

- Standard: Starting $65/month

- Enterprise: Starting $99/month

For an updated and complete list of features, please check out their pricing page.

Installing Milvus Vector Database

Let's go ahead and install Milvus. The steps below are taken from the official docs and have been tested on my macOS machine.

- Create a new directory on your machine, we'll call it

milvus-demo:

mkdir milvus-demo && cd milvus-demo- Download the

milvus-standalone-docker-compose.ymlfrom this URL - Save it in your

milvus-demodirectory - Rename it to

docker-compose.yml:

mv milvus-standalone-docker-compose.yml docker-compose.yml- Start Milvus by running:

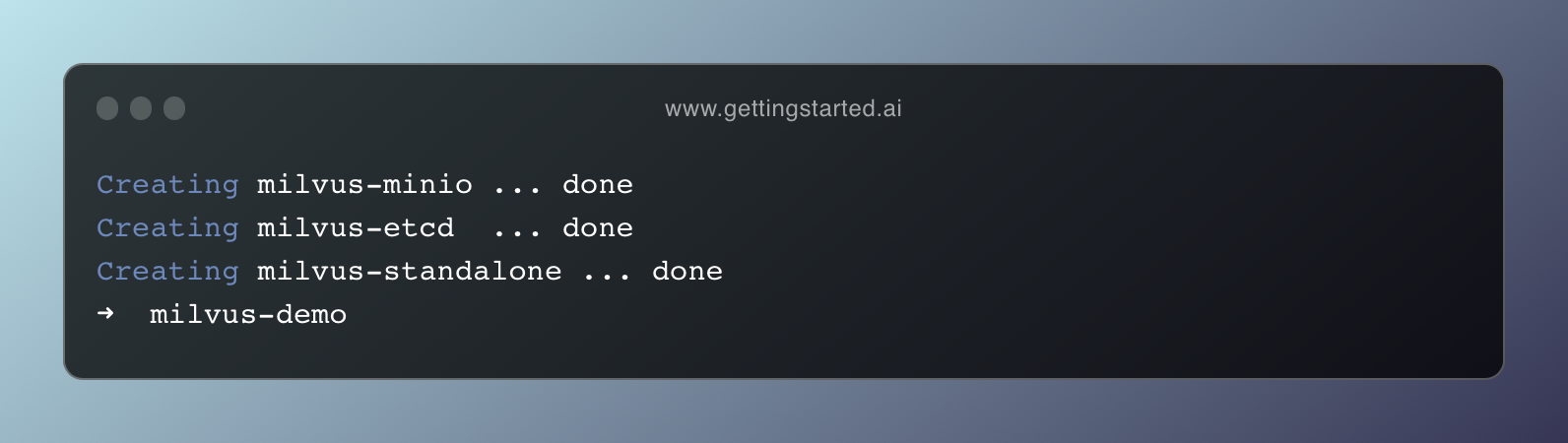

docker-compose up -d- Installation will start. Once done, you should have something similar to this:

- Find the port being used by the

milvus-standaloneprocesses:

docker compose ps

>>> milvus-standalone "/tini -- milvus run…" standalone running (healthy) 0.0.0.0:9091->9091/tcp, 0.0.0.0:19530->19530/tcp- Connect to Milvus:

docker port milvus-standalone 19530/tcp

>>> 0.0.0.0:19530In my case that's the IP/port that I can use to connect to Milvus (0.0.0.0:19530).
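If you'd like to sanity-check that endpoint from Python before wiring up LangChain, here is a small sketch using only the standard library. The helper names parse_endpoint and is_port_open are hypothetical, and probing 127.0.0.1 is an assumption: 0.0.0.0 means the port is published on all interfaces, so the loopback address should reach it when Docker runs on the same machine.

```python
import socket


def parse_endpoint(endpoint: str) -> dict:
    """Split a "host:port" string into a dict with "host" and "port" keys."""
    host, _, port = endpoint.strip().rpartition(":")
    if not host or not port.isdigit():
        raise ValueError(f"expected 'host:port', got {endpoint!r}")
    return {"host": host, "port": port}


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


# The value printed by `docker port milvus-standalone 19530/tcp`
connection_args = parse_endpoint("0.0.0.0:19530")
print(connection_args)
print("Milvus port reachable:", is_port_open("127.0.0.1", int(connection_args["port"])))
```

An open port only tells us something is listening there; it does not prove Milvus itself is healthy, so the docker compose ps status is still the better health signal.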

Example Integration with LangChain

What is LangChain? Start here.

For this example, we'll be using:

- The Milvus Instance (from above)

- OpenAI API Key to generate embeddings

- Visual Studio Code (or any IDE of choice)

Set up API Key

Start by getting your API key from this page. Let's then set it as an environment variable by entering the below into our terminal:

export OPENAI_API_KEY="..."
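Since a missing key tends to surface later as a confusing authentication error, it can help to check for it up front. Below is a minimal sketch of such a check; get_openai_api_key is a hypothetical helper for this tutorial, not part of LangChain or the OpenAI SDK.

```python
import os


def get_openai_api_key() -> str:
    """Read OPENAI_API_KEY from the environment, failing early if it is missing."""
    key = os.environ.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "OPENAI_API_KEY is not set; export it in this shell before running main.py"
        )
    return key


try:
    get_openai_api_key()
    print("OPENAI_API_KEY found")
except RuntimeError as err:
    print(err)
```

Keep in mind that export only affects the current terminal session, so the check will fail in a new terminal unless the variable is set again there.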

Let's create our main.py file. In our milvus-demo directory type and enter:

touch main.py

Then open the project in Visual Studio Code by typing code . into your terminal.

Install and Import Required Modules

First, we'll need to install the Python SDK for Milvus Vector Database: pymilvus. In the root directory, let's go ahead and install it using pip:

pip install pymilvus

Next, in our main.py file, add the following imports:

from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.text_splitter import CharacterTextSplitter
from langchain.vectorstores import Milvus
from langchain.document_loaders import TextLoader

What we're doing here is just importing OpenAIEmbeddings, CharacterTextSplitter, Milvus, and TextLoader. These modules will help us load text from a file, split it into chunks, and then create embeddings from the chunks.
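To get a feel for what "split it into chunks" means, here is a simplified sketch of a character-based splitter. It is not LangChain's implementation: split_text is a hypothetical function that assumes paragraphs are separated by blank lines, merges them greedily up to chunk_size characters, and ignores overlap entirely.

```python
def split_text(text: str, chunk_size: int = 1000, separator: str = "\n\n") -> list[str]:
    """Greedily merge separator-delimited pieces into chunks of at most chunk_size characters.

    A single piece longer than chunk_size is kept whole rather than cut.
    """
    chunks: list[str] = []
    current = ""
    for piece in text.split(separator):
        piece = piece.strip()
        if not piece:
            continue
        candidate = f"{current}{separator}{piece}" if current else piece
        if len(candidate) <= chunk_size or not current:
            current = candidate  # still fits (or this is the first piece)
        else:
            chunks.append(current)  # close the current chunk and start a new one
            current = piece
    if current:
        chunks.append(current)
    return chunks


sample = "First paragraph.\n\nSecond paragraph.\n\nThird paragraph."
for chunk in split_text(sample, chunk_size=40):
    print(repr(chunk))
```

Smaller chunks give more precise search hits but less context per hit, so the chunk size is worth experimenting with for your own documents.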

Load and Split Text from File

Download the state_of_the_union.txt file from this URL and add it to your project directory.

# Load the text from the downloaded file
loader = TextLoader("state_of_the_union.txt")
documents = loader.load()

# Split the text into chunks
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
docs = text_splitter.split_documents(documents)

# Initialize OpenAIEmbeddings
# This will use text-embedding-ada-002 model by default
embeddings = OpenAIEmbeddings()

Initialize our Milvus Vector Database

To connect to our running Milvus instance, LangChain provides the Milvus class, which we imported from the langchain.vectorstores package.

We'll use the from_documents method and pass in docs and embeddings as shown below:

vector_db = Milvus.from_documents(
    docs,
    embeddings,
    connection_args={"host": "0.0.0.0", "port": "19530"},
)

Behind the scenes, LangChain passes the text chunks to the OpenAI embedding model, and the resulting embeddings are then stored in our Milvus instance.

The host and port values match what you get from Step 8 above. In my case, the result was: 0.0.0.0:19530.

Perform a Similarity Search

Now that we have our embeddings stored neatly in the Vector database, let's go ahead and perform a similarity search (also known as the nearest neighbour search).

If you're not familiar with the term, Similarity Search is an operation that finds similar vectors in a database given a query vector. This means that our query will be vectorized, and then compared with the stored vectors to find the most relevant ones from the database.
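The compare-and-rank step can be sketched in plain Python. The example below uses cosine similarity as one common measure and made-up three-dimensional vectors; the metric Milvus actually applies depends on how the collection's index is configured, and the names top_k and stored are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], stored: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the names of the k stored vectors most similar to the query."""
    ranked = sorted(
        stored,
        key=lambda name: cosine_similarity(query_vec, stored[name]),
        reverse=True,
    )
    return ranked[:k]


# Pretend these vectors came from an embedding model
stored = {
    "chunk about the economy": [0.9, 0.1, 0.0],
    "chunk about healthcare": [0.1, 0.9, 0.1],
    "chunk about jobs": [0.8, 0.3, 0.1],
}
query_vector = [1.0, 0.2, 0.0]  # the vectorized query

print(top_k(query_vector, stored, k=2))
```

Here the economy and jobs chunks rank highest because their vectors point in nearly the same direction as the query vector, while the healthcare chunk points elsewhere.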

Common use cases include recommendation systems, content retrieval, image search, and more.

Here's how we can perform a similarity search using our vector_db object. We'll simply write a query and pass it to the similarity_search method as such:

query = "What is this document about?"
docs = vector_db.similarity_search(query)

Now the docs object contains a list of the most relevant content based on the query. Here's how to peek at the first item in the docs:

print(docs[0].page_content)

Try playing around with the query and see how the results change based on your input. You may decide to build a chain that sends the page_content to an LLM for further processing, or use it for any other use case.
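As one possible next step, here is a rough sketch of how the retrieved text could be packed into a prompt for an LLM. This is plain string handling, not a LangChain chain; build_prompt, its wording, and the max_chars limit are all assumptions made for illustration.

```python
def build_prompt(question: str, chunks: list[str], max_chars: int = 2000) -> str:
    """Join retrieved chunks into a context block, stopping before max_chars is exceeded."""
    context_parts: list[str] = []
    used = 0
    for chunk in chunks:
        if used + len(chunk) > max_chars:
            break  # adding this chunk would exceed the context budget
        context_parts.append(chunk)
        used += len(chunk)
    context = "\n---\n".join(context_parts)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
    )


# Stand-ins for [doc.page_content for doc in docs]
retrieved = ["The first relevant passage.", "The second relevant passage."]
print(build_prompt("What is this document about?", retrieved))
```

In main.py the retrieved list would come from the search results, for example by collecting page_content from each item in docs.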

For more methods, please look at the API definition here as well as the official LangChain docs here.

Putting It All Together

Final Thoughts

This tutorial sets you up with the basics to get started with the Milvus Vector Database using LangChain.

Depending on your application requirements you'll most likely need to set up additional chains and/or agents. To dive deeper into LangChain, I highly suggest you get started here:

If you have any questions, please leave a comment below or connect with me on X and I'd love to assist further where possible.

Thanks for reading!

Further readings

More from Getting Started with AI

- New to LangChain? Start here!

- How to Convert Natural Language Text to SQL using LangChain

- What is the Difference Between LlamaIndex and LangChain

- An introduction to RAG tools and frameworks: Haystack, LangChain, and LlamaIndex

- A comparison between OpenAI GPTs and its open-source alternative LangChain OpenGPTs